The Fundamentals for All Test Team Leads

This handbook explains the basic concepts surrounding the uTest Test Team Lead role.

Developed by uTest Community Management – 2/11/2014

Introduction

The term TTL is a uTest-derived acronym that stands for ‘Test Team Lead.’ TTLs are hand-selected members of the uTest community who work side-by-side with uTest Project Managers (PMs) to facilitate the flow and outcome of test cycles. A few traits and required skills of good TTLs include:

- Hard skills: high-quality testing output with a proven track record, attention to detail, expertise managing test cycles based on customer requirements across multiple technologies, experience with uTest and an understanding of test cycle operations, and device- or industry-specific expertise

- Soft skills: excellent communication skills, professionalism, cultural sensitivity, fairness, and helpfulness

- Sense of urgency: attentive to test cycle details and activity, focused on responsiveness, escalates issues to the PM or CM as appropriate, constructive not confrontational

- Business acumen: understands what’s important to the customer with the ability to analyze and communicate the information across the testing community when necessary

Although the tasks required per test cycle may differ, the goal is the same: to increase the value that uTest brings to the customer by maximizing the output and minimizing the noise level.

Distribution of TTL Work

A TTL is vetted and trained before being placed onto paid projects. Paid projects are assigned by a PM. TTL payouts are always defined upfront, before any work is performed, and are kept between the TTL and the PM. Each assignment is different and depends on many factors, such as the TTL’s ability to triage bug reports or to create test cases. Other criteria may include the TTL’s experience in a particular testing domain, industry, or mobile platform, and access to those platforms to reproduce bugs. TTLs should use the TTL Availability Google Doc to enter their weekly availability and project preferences. Request access through the link provided.

Details of TTL Assignments

For many test cycles, the TTL’s main responsibilities are to monitor tester questions via test cycle chat and triage reports as they come in. TTLs should be in regular contact with the PM to ensure that the test cycle is headed in the right direction and that the customer is delighted with the results. Below are details of the responsibilities TTLs may be asked to perform:

- Platform Communications with Testers and Customers

No matter the type of communication, keep in mind that testers must follow the uTester Code of Conduct at all times. TTLs should monitor the chat as closely as possible in order to address incoming questions and deliver outgoing announcements, especially in the first few hours after the test cycle launches.

- Chat: Test cycles typically have real-time chat enabled, which allows TTLs to communicate with testers, customers, and project managers in real time. There are three components of chat: Questions about Scope, Announcements, and Threaded/Topic Conversations.

- Questions on Scope – for general questions on the cycle. These are usually questions about scope, but can include other general questions about the cycle, such as difficulty installing the build or claiming a test case.

- Announcements – where TTLs should place their welcome message to the testers (see example welcome message in the addendum). Also, this is where the TTL would make any other announcements such as: announcing a new build, announcing a new out of scope section, making a correction to the information in the overview, reminding testers about the cycle close time, encouraging testers to get test cases submitted, refocusing the team on the focus areas.

- Topic Conversations – discussions isolated to a specific topic. If there is a major development that the TTL thinks should have more attention than just an announcement, consider starting a new thread. These Topics can garner more attention because the TTL can edit the title. Threaded conversations are also good for topics like test case claims. Using these topics can keep the Questions about Scope section a bit cleaner.

NOTE: All chat activities can result in an email sent to the testers and the customer depending on their settings, so please be mindful of the noise that chat can create.

- Tester Messenger: Notes that TTLs add to Tester Messenger send an email directly to the tester who reported the issue and are added to the defect report for easy reference. These messages are visible to the customer, the PM, and any other testers on the test cycle. TTLs should use the Tester Messenger when:

- There is a question about the defect that requires an answer from the reporting tester.

- A tester has left a message for the TTL or the customer. Testers can reply here only after the TTL, PM, or customer has left them a message in the Tester Messenger; testers cannot initiate that exchange. In addition, the TTL receives an email any time a message is added to a bug that they have commented on. When testers leave a message for the customer, the customer should have visibility and may respond, but if the customer has historically not been very responsive, the TTL can email the Project Manager to relay the question and then respond to the tester in the Tester Messenger.

NOTE: Be careful not to expose the contact information of the customer’s Test Manager (TM) to the tester if responding via email (for example, when forwarding a response). The TTL must never give out the TM’s information unless expressly asked to do so by the PM. If asked, send the information to the tester privately through email or chat to keep it out of the Tester Messenger, where other testers could see it. It is preferable for uTest to be the go-between rather than to open direct channels between testers and TMs.

- If the TTL determines that the question and answer would be valuable to other testers, share this information with all testers in the Chat/Scope & Instructions.

- Request More Info: If information is missing or more clarification is required from a tester, the TTL can move the bug report to the “Request More Info” status, which shows that the report was reviewed but requires a response from the tester. Note that CM recommends using this status only for small pieces of missing information. A bug with more significant problems (for example, one that does not follow the customer’s bug template, is clearly a placeholder, or is simply poorly written) should be rejected as “Did Not Follow Instructions.” TTLs are encouraged to help the overall community raise expectations of testers and eliminate consistently poor bug documentation by rejecting bugs that meet those criteria.

NOTE: Moving a report into the “Request More Info” status automatically adds a note to the Tester Messenger and prevents the tester from discarding the bug. When the tester responds, the bug’s status returns to “New”, which the TTL can see in the Platform UI and through an email notification. The status change also alerts testers that their bug needs attention. Testers should be reminded to press the “Send Requested Info” button. If the tester does not respond before the cycle closes, the bug is automatically rejected.

- Customer Notes: Notes that you add to Customer Notes send an email to the customer’s Test Manager and are copied into the defect report for easy reference. These notes are visible to the customer, the PM, and any TTLs on the test cycle who have TTL permissions (but not to testers). TTLs should use Customer Notes when:

- The defect needs to be exported to the customer’s tracking system

- The defect has been exported to the customer’s tracking system and the TTL needs to leave a note identifying the corresponding defect ID# in the other system.

- The TTL needs to communicate directly with the customer about the issue, for example to provide clarifying information discovered after the initial recommendation was made, or to respond to a request or question from the customer.

NOTE: The key to keeping track of what needs to be exported versus what has already been exported is consistency. For example, the TTL leaves a Customer Note only when the defect has been exported to the customer’s tracking system, and in that note simply enters the corresponding defect ID# from the other system. If not all defects will be exported to the customer’s tracking system, or if a defect is being approved but not exported, the TTL can leave a note saying so.

- Direct Email: uTest Project and Community Managers place a high level of trust in our TTL community. Occasionally, TTLs will be given access to confidential and/or proprietary information, including tester email addresses. TTLs must treat this confidential information with the utmost sensitivity.

NOTE: If you are emailing more than one tester at a time you must place email addresses into the BCC field. This will ensure that tester email addresses remain hidden from fellow community members.

- Triaging Issues

If possible, triage reports as they come in (or at short intervals) to prevent a pileup of work. Doing so makes it easier for the TTL to spot duplicates and to identify areas of the application that may need further clarification from the customer (in these cases, contact the PM immediately). Testers also appreciate timely triage, because it helps them decide where to focus subsequent testing. There are two levels of permissions for TTLs: Basic and Approval. The Basic level allows the TTL to send a recommendation for how an issue should be triaged, while Approval allows the TTL to triage as a customer or PM would. Basic-level permissions require the TTL to complete several predetermined dropdowns that give the customer and PM more information, including whether the issue is valid, in scope, reproducible, and a duplicate of a previously reported issue (hereafter called the TTL Recommendations Workflow). Please consider the following items when triaging issues:

- a. Triage fairly and consistently: The age-old question is whether to give testers a second chance when they do not follow instructions, or to use a ‘tough love’ approach that signals the importance of high quality in every interaction. To save time and resources, our preference is the ‘tough love’ approach, unless the report is salvageable and could be valuable to the customer. Therefore, bugs that violate the customer-specified bug templates should be rejected as “Did Not Follow Instructions”, while high-quality bugs and reports with minor mistakes can be salvaged using the “Request More Info” status (more on that below). Testers can discard their bugs until the TTL triages them, which includes moving them to the “Request More Info” status.

- b. Bug Templates – Is the report in the proper format (if the customer asked for a specific format)?

- i. Confirm that the bug title contains the appropriate information.

- ii. Confirm that the steps to reproduce are clearly documented as required by the customer or development team. Testers should be urged to number their steps to make them easier for the customer to read and reproduce.

- iii. Confirm that Expected Results and Actual Results are documented clearly and provide factual information.

- iv. Confirm that all appropriate attachments are included as required.

- v. Confirm that all additional environment information is included.

- vi. Confirm that all other customer-specific format requirements are met.

- vii. Confirm that the bug is classified appropriately.

- c. Bug Reproducibility – Is there sufficient evidence supplied in the bug report?

- i. When possible, TTLs should attempt to reproduce each submitted issue. In some cases, device or time constraints prevent the TTL from attempting to reproduce a defect before triaging it. In these instances, the TTL should ensure the tester has provided sufficient proof that the issue occurred during their testing (logs, screenshots, videos, clear and complete steps, etc.).

- ii. If the TTL is unable to reproduce the issue on the same device/OS as the tester, they may ask the tester to confirm that the issue is still happening and that the written steps are full and complete. The tester may have missed a step, or the issue may have corrected itself. If the bug contains adequate information to show that the tester was seeing the issue at the time it was reported, non-reproducibility should not be a reason to reject the issue before the customer has reviewed it.

- iii. If there is missing information or more clarification is required from a tester, the TTL can push a bug report to the “Request More Info” status, which shows that the bug report was reviewed but requires a response from the tester.

Note: Moving a report into the “Request More Info” status automatically adds a note to the Tester Messenger and prevents the tester from discarding the bug. When the tester responds, the bug’s status returns to “New”, which the TTL can see in the Platform UI and through an email notification. The status change also alerts testers that their bug needs attention. Testers should be reminded to press the “Send Requested Info” button. If the tester does not respond before the cycle closes, the bug is automatically rejected.

- d. Bug Classification – Are the severity and bug type of the issue appropriate?

- i. If unsure, clarify with the PM about severity and value expectations. For example, what do they consider “Critical” vs. “Low”?

- ii. If the Severity is not appropriate, send the tester a message asking them to update it, or the TTL can update it themselves if they have the appropriate permissions in the platform. TTLs can also leave a message for the customer’s Test Manager to make the update when they are approving bugs.

- iii. If the type (functional, UI, technical) is not appropriate, send the tester a message asking them to update it, or the TTL can update it themselves if they have the appropriate permissions in the platform. TTLs can also leave a Customer Message for the customer’s Test Manager to make the update when they are approving bugs.

- e. Duplicate Bugs – Is the issue a duplicate of a previously-submitted issue?

- i. If you believe it is, reject the issue as a duplicate. Be sure to cite the bug report # that the rejected issue is a duplicate of. The time stamp for each defect entered into the platform is critical. When deciding which defect is a duplicate and which defect to approve, bugs reported earliest take precedence.

- ii. If the customer does not want the TTL to do any actual approvals or rejections, the TTL can recommend that the defect be rejected with the reason Duplicate and provide the # of the original defect for reference.

- f. Out of Scope – Does the bug appear to be in scope?

- i. The scope is determined by the customer and should be clearly defined in the Scope and Instructions provided by the PM.

- ii. If the bug is not in scope and the TTL can only make recommendations, recommend rejection using “out of scope” as the reason.

- iii. If the bug is not in scope and the TTL has the ability to approve and reject, then simply reject the bug using the “out of scope” reason.

- g. Bug Validity – Does the bug appear to be valid?

- i. Most submitted defect reports will be clearly valid, or clearly invalid for one of the reasons above, but some will need further consideration. It may be worthwhile to try to reproduce anything you are in doubt about, to get a clearer idea of what issue is actually being reported.

- h. Bug Approvals – If the TTL has the ability to approve bugs, they should take great care to approve each bug at the proper value. Do not accidentally approve a bug as Exceptional when it should be Very or Somewhat valuable.

- i. Customer Approvals – How should the TTL handle a reported issue that is a valid defect from the tester’s standpoint but not a valid issue from the customer’s perspective?

- i. In this scenario, there are two actions that the TTL can take, depending on the individual circumstances:

- i) Reject (or recommend rejection) as Working as Intended/Designed. This reason does not negatively affect the tester’s rating, but the tester will not be paid for the defect.

- ii) If applicable, Approve (or recommend approval of) the defect but leave a Customer Note that indicates that it should not be imported into the customer’s tracking system. Defect value is normally set to “Somewhat valuable” for these.

- ii. If the application under test is overly complicated, or the customer does not have a complete list of known issues and working-as-designed behavior, it is fairer to approve the defect so the tester is paid for the time spent reporting it. Too many “Working as designed” rejections cause testers to shy away from testing and can cause participation rates to drop.

- iii. If the average tester would consider the issue as working as designed, then a rejection is the appropriate strategy. Use your best judgment when making this decision.

- j. Bug Export – If the bug is valid, does it need to be entered into the customer’s bug tracking system?

- i. If so, and the TTL will be handling the bug tracking system import, there are two ways this can be done.

- i) If the account is set up for it, you can use the “Export to Tracking” button on the bug report in the platform to automatically move the report into the customer’s system. This functionality is customer facing. If a TTL is given an account on the customer’s site, they must not use that account to triage bugs; TTLs should use only their tester/TTL account to triage issues. Under no circumstances should TTLs approve their own bugs.

- ii) If the account is not set up for it, you can manually copy the bug report from the uTest JIRA system to the customer’s bug tracking system. The most efficient way to do this is to click the Copy link at the top of the defect screen in the platform and paste it into WordPad, then copy the sections you need into the corresponding sections in the customer’s tracking tool (JIRA/Team Track/etc.).
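The precedence rule in item e (when two reports describe the same issue, the earliest-reported bug is kept and later ones are rejected as duplicates citing the original’s #) can be sketched in Python. This is a hypothetical illustration, not a uTest platform feature: the `BugReport` fields, the `issue_key` value (a stand-in for the TTL’s own judgment that two reports describe the same issue), and the tie-break on bug ID are all assumptions.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass
class BugReport:
    # Hypothetical fields for illustration; not the uTest platform's data model.
    bug_id: int
    reported_at: datetime
    issue_key: str  # stand-in for the TTL's judgment that reports describe the same issue


def triage_duplicates(reports):
    """Return (originals, rejections); each rejection is (duplicate_id, original_id).

    Within each group of reports sharing an issue_key, the earliest-reported bug
    is kept and every later report is marked as a duplicate citing the original.
    """
    groups = {}
    for report in reports:
        groups.setdefault(report.issue_key, []).append(report)

    originals = []
    rejections = []
    for group in groups.values():
        # Earliest timestamp takes precedence; bug_id breaks exact ties (an assumption).
        group.sort(key=lambda r: (r.reported_at, r.bug_id))
        original = group[0]
        originals.append(original.bug_id)
        for duplicate in group[1:]:
            rejections.append((duplicate.bug_id, original.bug_id))
    return originals, rejections


# Example: bug 102 was submitted after bug 101 for the same issue.
reports = [
    BugReport(102, datetime(2014, 2, 11, 9, 5), "login-button-unresponsive"),
    BugReport(101, datetime(2014, 2, 11, 9, 0), "login-button-unresponsive"),
    BugReport(103, datetime(2014, 2, 11, 9, 10), "cart-total-wrong"),
]
originals, rejections = triage_duplicates(reports)
print(originals)   # [101, 103]
print(rejections)  # [(102, 101)]
```

The hard part in practice is the grouping itself: deciding that two reports really describe the same issue still requires reading them and, where possible, reproducing them.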

- Triaging Test Cases

Depending on the test cycle and PM, TTLs may be responsible for approving or rejecting Test Cases. TTLs should always open each Test Case submission and check for all requested materials before choosing to Approve or Reject. Please consider the following points in triaging test cases:

- a. Did the tester include all requested documents or information with the Pass/Fail verdict?

- i. If attachments were requested, confirm that they are actually attached. Then open them and verify that they are in the correct format and contain the necessary information. If a single tester made multiple submissions, compare the documents across their completed test cases to verify that they are original and not simply duplicates with different titles and dates.

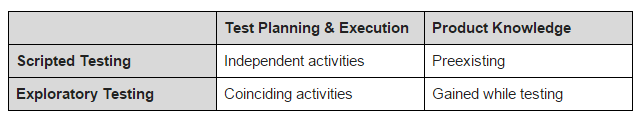

- ii. If the tester was asked to do exploratory testing, make sure the exploratory notes are filled out completely and cover a sufficient amount of testing time, based on the expectation set in the overview or on the payout (e.g., a $10 test case will probably have a lower expectation of work than a $30 test case).

- b. Were the testers required to sign up (usually via an external document) for a limited number of test cases? If so, check to be sure the tester’s submission you are approving is slotted for a test case and that the device/browser/etc. details match.

- i. If the testers were required to sign up, were they instructed to only fill a specific number of slots? Keep an eye on testers who try to reserve more than their share of test case slots.

- Bug Fix Verification

If the TTL is working with a customer who either has bug tracking integration with our platform or whose defects the TTL transfers manually, the TTL may also be responsible for identifying the need for (and possibly kicking off) Bug Fix Verification (BFV) cycles. Clarify with the PM who will be responsible for BFV management before the test cycle starts. Keep an eye on the fix statuses of defects that have been moved from the uTest platform to the customer’s bug tracking system, and either manage the BFV cycles in a timely fashion or notify the PM which issues need attention. If testers verify a bug as fixed via BFV, be sure to update the status of the corresponding bug in the customer’s tracking system. If the testers find that the issue still recurs, record this status in the other system and continue to monitor it there, kicking off another round of verification testing when warranted. Again, be sure to clarify with the PM who will be responsible for BFV management before the test cycle starts.

- Disputed Bugs

If a tester disputes a bug, the TTL should review the comments and then message the tester through the Tester Messenger to let them know that their bug is being reviewed. If the TTL has control of rejections and approvals, they can determine a final status. If the dispute is rejected, the bug changes to Under Review so the PM can make a final determination. If the TTL made a mistake in the initial triage of the bug, they can recommend to the PM that the dispute be resolved and the bug approved.

- TTL Testing on Test Cycles

Before launch, TTLs should discuss with the test cycle’s PM whether they can test on the same test cycle that they are leading. If the PM agrees, the TTL should tell the testers in the welcome message in chat to reduce confusion.

Expectations and Escalations

The following list contains the expectations and escalation paths specific to all TTLs. The tester Code of Conduct applies in all cases for all testers and TTLs.

- Expectations: TTLs are expected to…

- Respond as quickly as possible to questions in chat for the test cycle. The availability schedules for the TTL and the PM should be listed in the Scope and Instructions. While testers typically expect chat responses within an hour, TTLs should attempt to respond within 2-4 hours during normal business hours and within 12 hours during nights and weekends.

- Closely monitor each test cycle immediately after it launches for questions in chat and issues with bug reports.

- Monitor chat for appropriate tester behavior and escalate any issues that are observed.

- Help reduce the noise created by testers, and the number of information requests that PMs and TMs must address, by gaining a strong understanding of the application and the scope of the test cycle and taking the lead role in answering questions in chat or in the defects.

- Triage defects and test cases during the test cycle. Feedback to testers during the cycle (including approvals, rejections, or requests for more information) can result in more detailed, higher-quality bug reports and test cases for the customer. Faster responses to testers can also improve the quality of subsequent bugs in the current test cycle and in future test cycles.

- Provide feedback to testers based on their knowledge of the test cycle, the application under test, the customer, the devices under test, and the expectations listed by the PM prior to test cycle launch. It is understood that this information may change from test cycle to test cycle, or even within a single test cycle.

- TTLs should provide feedback to testers when expectations are not met in defects, test cases, and reviews. They may also provide feedback for exceptional work.

- Be flexible, very flexible.

NOTE: If a TTL is unable to commit to the expectations of a particular test cycle, they must give the Project Manager sufficient notice so that the role can be successfully backfilled. Sufficient notice will vary by test cycle; at the very least, a TTL must give 48 hours’ notice that they will be unable to perform the work required. However, if a cycle has a longer on-ramping time, the TTL should inform the PM two weeks before exiting the TTL role.

- Escalations:

- For any issues dealing with the test cycle, the TTL escalates to the primary Project Manager first. If there’s not a response within 24 hours for normal issues or 12 hours for urgent issues, then the TTL should send an email to PM.Escalate@utest.com with a subject line in the following format – [TTL Escalation for Customer Name and Test Cycle ID#] and details on the issue in the body of the message. A TTL should only directly contact the customer if permission has been granted by the Project Manager, either at the project or test cycle level.

Example 1: Testers record questions in chat about the fact that there is no review tab active for the test cycle, though the scope refers to customer reviews when testing is completed.

Example 2: For an e-commerce site, testers are asking whether the entire shopping cart function is out of scope or only the final purchase step.

- Any issues with tester behavior, Code of Conduct infractions, or similar problems should be escalated to CM, with notification to the PM.

Example 1: Tester discusses test case pay out rates in chat.

Example 2: Tester disputes rejected bugs multiple times.

Example 3: Tester misuses slotting spreadsheets.

- As mentioned above, if a TTL is unable to commit to the expectations of a particular test cycle, they must give the Project Manager sufficient notice so that the role can be successfully backfilled. Sufficient notice will vary by test cycle; at the very least, a TTL must give 48 hours’ notice that they will be unable to perform the work required. However, if a cycle has a longer on-ramping time, the TTL should inform the PM two weeks before exiting the TTL role.
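The first escalation item combines a timing rule (24 hours for normal issues, 12 hours for urgent ones) with a subject-line format. A minimal Python sketch of both follows, assuming plain elapsed clock time measured from when the TTL contacted the PM. It ignores the 8am – 8pm US Eastern window in the TTL Bill of Rights, and the function names and the way the subject-line placeholders are filled in are hypothetical.

```python
from datetime import datetime, timedelta

# Response windows before a TTL escalates past the primary PM (from the handbook).
RESPONSE_WINDOW = {
    "normal": timedelta(hours=24),
    "urgent": timedelta(hours=12),
}


def should_escalate(contacted_pm_at, now, urgency, pm_replied):
    """Return True if the PM has not replied within the window for this urgency.

    Hypothetical helper: measures plain elapsed time and ignores business hours.
    """
    if pm_replied:
        return False
    return now - contacted_pm_at >= RESPONSE_WINDOW[urgency]


def escalation_subject(customer_name, cycle_id):
    """Fill in the [TTL Escalation for Customer Name and Test Cycle ID#] format.

    How the placeholders are rendered here is an assumption.
    """
    return f"[TTL Escalation for {customer_name} and Test Cycle {cycle_id}]"


# Example: PM contacted at 9:00, still no reply at 22:00 the same day (13 hours).
contacted = datetime(2014, 2, 11, 9, 0)
now = datetime(2014, 2, 11, 22, 0)
print(should_escalate(contacted, now, "urgent", pm_replied=False))  # True
print(should_escalate(contacted, now, "normal", pm_replied=False))  # False
print(escalation_subject("Example Customer", "12345"))
```

Whether an issue counts as urgent remains the TTL’s judgment; the sketch only shows when the email to PM.Escalate@utest.com would become due.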

TTL Bill of Rights

- Receive notification of test cycle assignments prior to launch of the test cycle

- Receive anticipated scope of work prior to TTL accepting each TTL assignment

- Receive information about anticipated payout prior to TTL accepting each TTL assignment

- Receive notification from the PM prior to the launch of the next test cycle if PM decides to swap TTLs for any reason

- Receive a response from the PM within 24 hours for normal issues or 12 hours for urgent issues during the following timeframe: 8am – 8pm US Eastern time. If you don’t hear back, you may email PM.Escalate@utest.com with the customer name and test cycle ID in the subject line

- Expectation that testers will contact TTLs through the platform only, not through any other method like Skype, IM, Google+, etc.

uTest Business Model

For TTLs who have not been with uTest for long, here is a brief outline of uTest’s business model. This knowledge should put things into perspective when you have outstanding questions. However, the Community Management Team is always available to address any open questions about the uTest business model and how it pertains to any TTL assignments – past, present, or future.

The uTest business model is built on these Customer Expectations – that uTest:

- Provides consultation for test strategy, test cycle configuration, and test cycle optimization to achieve high-quality results

- Provides various encapsulated industry and domain-level knowledge and experience

- Provides testing expertise and diverse testing coverage

- Provides high value defect reports

- Responds quickly to customer and testers alike

- Assures a low level of noise during each test cycle

Basic Business Concept:

- Larger customers generally engage uTest for longer timeframes to ensure continuity of testing coverage and expertise. In general, Project Managers that cover larger customers have fewer total customers, as this allows for deeper focus on customers who generally have greater needs.

- Smaller customers may engage uTest for a variety of time periods, whether it’s several test cycles or annual contracts. In general, Project Managers that cover smaller customers will have more customers, which generally results in slower response times.

Key Message for TTLs: No matter the size of the customer or the size of the test cycle, every customer should be delighted with the test cycle experience from activation to close.

TTLs and SDLC

A basic understanding of the Software Development Lifecycle (SDLC) is very useful for every TTL. The better a TTL understands the different stages of the SDLC, the better the TTL can prepare the testers on the test cycle and set appropriate expectations for them. In general, applications tested earlier in the development cycle (like an alpha release) have relatively untested code, and testers can expect to find more bugs. Applications tested later in the development cycle, on the other hand, have more stable code with fewer bugs, and those bugs are harder to find and more critical to the live release of the software. Please refer to the Wikipedia definition of the SDLC to begin your research on this topic.

Conclusion

The term TTL is a uTest-derived acronym that stands for ‘Test Team Lead.’ In a Test Cycle, a TTL is the bridge that connects Project Management, Testers, Customers, and Community Management and assists in increasing the uTest value to the customer and the testers.

TTLs have 3 primary tasks in a test cycle:

- Platform communications

- Triaging Bugs

- Triaging Test Cases

Be sure you thoroughly understand the standards and expectations in this handbook before engaging in any test team leadership activities. Because it cannot be repeated enough: the goal for the TTL is to increase the value that uTest brings to the customer by maximizing the output and minimizing the noise level. We are placing a great deal of trust in your technical and leadership abilities as a TTL to help manage each test cycle. Therefore, please do not hesitate to reach out to the CM Team if you do not believe we are providing the training or expectations that all parties need for you to be successful and for our customers to get the highest value from uTest. Lastly, feel free to reach out to the uTest Community Management Team if you have any questions or concerns about current or recent TTL assignments.

Recommended Next Steps

Once you feel comfortable with the information contained within the TTL Handbook you may request access to the TTL Entry Level Exam by emailing TTL@utest.com.

- Participate in on-line training sessions as they are available.

- Further training may be required:

- Rules about exporting bugs:

- CSV

- Bug tracking systems

- Communications with PMs

- Communications with CM

Addendum

Chat Examples:

Example: New Chat Topic – Welcome message:

When the test cycle launches, the TTL should introduce themselves as the Test Team Lead for this test cycle in the Chat Topics. This notification establishes a point of contact testers can reach out to with their questions and observations. A generic welcome message is provided below. Amend as appropriate:

Welcome to this <Project name> cycle. I will be your Test Team Lead on this project. At any time, please let me know if you have any questions or concerns. I will try to find an answer for you as soon as possible.

Please ensure that you read the scope and instructions carefully. Check the Out of Scope section and known issues list before starting your testing effort.

In addition, make note of other bugs raised during the cycle to ensure that you do not raise duplicate bugs.

When raising issues, please ensure that the bug titles and descriptions are of the highest quality. They should be descriptive and clear. The bug title in particular should be descriptive enough to explain the area and issue so it is easy to spot when going through the bug list.

Please be sure to include all mandatory information for the bug as required.

Thanks a lot, and happy testing!

<TTL’s Name>